Towards Artificial Intelligent Reasoning

Humans are known to use both intuition and reasoning in their decision-making process.

Intuition is associated with making decisions with the highest expected reward based on past experiences (model-free reinforcement learning), while reasoning is associated with making decisions with the highest expected reward based on representations of the world used to predict outcomes that may or may not have been directly experienced in the past (model-based reinforcement learning).

Both pathways compete for decision making at any given time for any given task and which one dominates is mediated by our own experiences of their relative utility, where utility is a trade-off between rewards derived from making good decisions and complexity of making such decisions. A key benefit of this architecture is that it allows us to quickly and flexibly respond to unexpected changes in rewards and/or transition probabilities, e.g. when our intuition is no longer valid.
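
To make the contrast concrete, here is a minimal sketch of the two pathways on a toy two-action problem. Everything in it is an illustrative assumption: the action names, the transition probabilities, the reward values, and the simplification that the model-based pathway learns about the reward change immediately.

```python
# Toy two-action problem; all names and numbers are illustrative assumptions.
ACTIONS = ["left", "right"]

def model_free_update(q, action, reward, lr=0.1):
    """Nudge the cached value of `action` toward the reward just experienced."""
    q[action] += lr * (reward - q[action])

def model_based_values(transition, reward_of_state):
    """Compute each action's expected reward from a model of the world."""
    return {
        a: sum(p * reward_of_state[s] for s, p in transition[a].items())
        for a in ACTIONS
    }

def greedy(values):
    """Pick the action with the highest value."""
    return max(values, key=values.get)

# World model: each action leads to an outcome state with some probability.
transition = {"left": {"A": 0.9, "B": 0.1}, "right": {"A": 0.1, "B": 0.9}}
reward_of_state = {"A": 1.0, "B": 0.0}

# Build up "intuition": cached values learned from repeated experience.
# For simplicity each experience is the expected reward, not a noisy sample.
q = {a: 0.0 for a in ACTIONS}
for _ in range(200):
    experienced = model_based_values(transition, reward_of_state)
    for a in ACTIONS:
        model_free_update(q, a, experienced[a])

# The rewards flip unexpectedly: state B now pays off instead of state A.
reward_of_state = {"A": 0.0, "B": 1.0}

intuition_choice = greedy(q)  # still relies on the stale cached values
reasoning_choice = greedy(model_based_values(transition, reward_of_state))
```

In this sketch the cached values keep recommending `left` until many new experiences overwrite them, while the model-based computation switches to `right` as soon as its representation of the rewards is updated.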

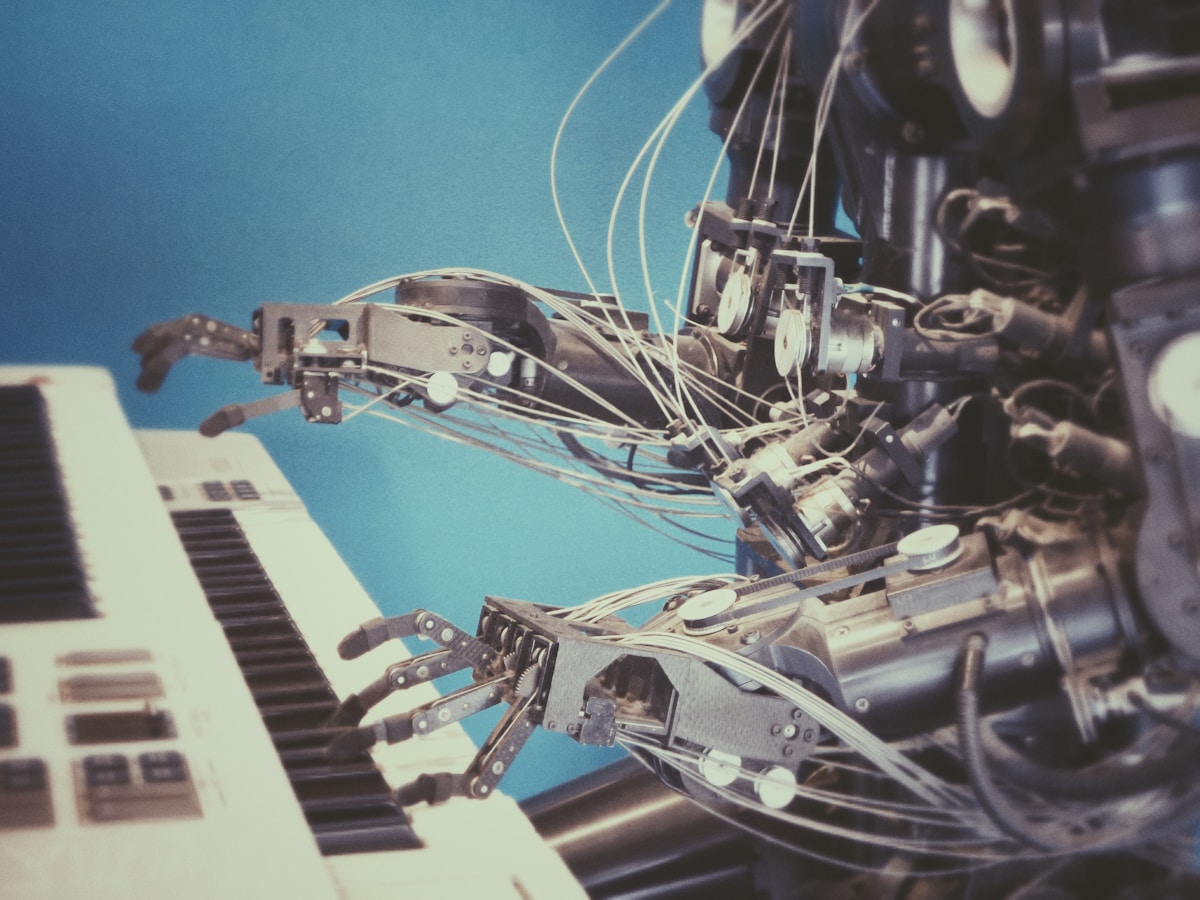

As we collaborate with others, this same mediation process further helps us compare and prioritize endogenous and exogenous learnings in order to maximize the utility of decisions based on our collective knowledge. One can think of this architecture as a blueprint for how to implement collaborative and cooperative learning and reasoning among computer agents.

In such an architecture, each agent consists of a model-free and/or a model-based reinforcement learning process and an uncertainty-based arbitration process that continuously evaluates their relative precision and accuracy. This arbitration operates both within and across agents, and determines which process prevails in driving individual and collective decision making.
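
One simple way to picture uncertainty-based arbitration is to track how well each process has been predicting rewards and to favor the one with the smaller recent error. The sketch below is a hypothetical illustration, not a reference implementation: the `Controller` class, the decay factor, and the reward values are all assumptions, and it ignores the computational cost side of the trade-off.

```python
from dataclasses import dataclass

@dataclass
class Controller:
    """A decision process with a running estimate of its own reliability."""
    name: str
    prediction: float        # reward it predicts for its preferred decision
    error_var: float = 1.0   # running mean of squared prediction errors

    def observe(self, actual_reward, decay=0.9):
        """Update the running squared-error estimate after seeing the outcome."""
        err = actual_reward - self.prediction
        self.error_var = decay * self.error_var + (1 - decay) * err ** 2

def arbitrate(controllers):
    """Return the most reliable controller and normalized precision weights."""
    precisions = {c.name: 1.0 / (c.error_var + 1e-9) for c in controllers}
    total = sum(precisions.values())
    weights = {name: p / total for name, p in precisions.items()}
    winner = max(weights, key=weights.get)
    return winner, weights

intuition = Controller("model_free", prediction=1.0)
reasoning = Controller("model_based", prediction=0.2)

# Assumed scenario: the environment changed and rewards now hover around 0.2,
# so the model-free prediction of 1.0 is no longer valid.
for reward in [0.25, 0.15, 0.2, 0.3, 0.1]:
    intuition.observe(reward)
    reasoning.observe(reward)

winner, weights = arbitrate([intuition, reasoning])
```

The same `arbitrate` function could in principle be applied to controllers that belong to different agents, which is the sense in which arbitration operates both within and across agents.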

The mediation process is in essence a multi-objective optimization process.

At the individual level, the optimum solution space consists of a Pareto-optimal frontier: when computational resources and latency are non-issues, model-based reasoning always dominates, whereas when computational resources are scarce and speed is critical, model-free intuition dominates (e.g. the fight-or-flight response).

Extending this concept to multiple agents, a solution space can be defined by continuously weighting the utility of each agent based on the “return” and “variance” of their learning models, and a Pareto-optimal frontier can be derived, much like in an optimally diversified stock portfolio.
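
As a rough sketch of this idea, the code below takes hypothetical agents described by a (return, variance) pair, keeps those on the Pareto frontier, and weights them with a simple mean-variance score. The agent names, the numbers, and the `risk_aversion` parameter are assumptions, and the scoring rule is a heuristic that ignores correlations between agents, unlike a full portfolio optimization.

```python
def pareto_frontier(agents):
    """Keep agents that no other agent beats on both return and variance."""
    frontier = []
    for name, (ret, var) in agents.items():
        dominated = any(
            o_ret >= ret and o_var <= var and (o_ret > ret or o_var < var)
            for other, (o_ret, o_var) in agents.items()
            if other != name
        )
        if not dominated:
            frontier.append(name)
    return frontier

def risk_adjusted_weights(agents, risk_aversion=1.0):
    """Weight agents by return minus a variance penalty, clipped at zero."""
    scores = {n: max(r - risk_aversion * v, 0.0) for n, (r, v) in agents.items()}
    total = sum(scores.values())
    return {n: s / total for n, s in scores.items()} if total > 0 else scores

# Hypothetical agents: name -> (expected return, variance of that return).
agents = {
    "agent_a": (0.80, 0.30),
    "agent_b": (0.60, 0.10),
    "agent_c": (0.55, 0.25),  # worse than agent_b on both criteria
    "agent_d": (0.90, 0.60),
}

frontier = pareto_frontier(agents)
weights = risk_adjusted_weights({n: agents[n] for n in frontier})
```

Here `agent_c` drops out because `agent_b` offers a higher return at a lower variance, and the remaining agents share the decision in proportion to their risk-adjusted scores.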

In practice, ensemble techniques are commonly used as a mediation process, which largely amounts to sampling and weighting learnings across multiple agents.

While an interesting step forward, this implementation falls short of truly artificial collaborative learning and reasoning because of one key limitation: the need to establish a shared conception of the problem and the goal at hand across agents.

For an individual agent, that is typically a given, but for a collection of agents, that shared conception has to be constructed and maintained by human intervention. As a result, such attempts have generally focused on near-identical computer agents trained on a common dataset, resulting in a great lack of diversity and therefore a lack of opportunity for unexpected value generation.

The power and success of human civilization are largely rooted in our ability as humans to work together to tackle complex tasks despite our different backgrounds and individualities.

It is the very fact that we all experience life differently that is often the catalyst to innovative problem solving. Our ability to self-generate a shared conception of a problem and a goal is made possible by three key elements: a common sense, a common language, and a common logic.

A common sense is what allows us to perceive, analyze, and judge reality consistently across individuals and thus to trust each other’s experiences. Moreover, a common language is what allows us to share information and transfer learning about what we know from our own experiences to be associated with certain outcomes.

A common logic is what allows us to integrate learnings from multiple individuals into a cohesive learning model and holistic decision-making process. This includes fair arbitration when individual learnings overlap or conflict with one another.

Interestingly, all three elements are also required when engaging in a natural language conversation, which Turing famously selected as the ultimate test of artificial intelligence.

With these prerequisites in place, it now becomes possible to architect a mediation process that does more than simply weight the recommendations of multiple computer agents, and that instead automatically combines and hybridizes individual model-free and model-based learnings into a single richer and more powerful decision-making model. Such a model is shared across agents, paving the way towards artificial intelligent reasoning.

Many AI leaders, from Michael Jordan to Judea Pearl, have argued that the one essential element missing in machine learning today that could enable a common sense, a common language, and a common logic simultaneously is causality.

To understand why, it is worth revisiting the framework of causal learning.

While most of machine learning today aims to find patterns and interactions between variables that are primarily correlational in nature, causal learning aims to quantify cause-and-effect interactions between free-to-vary variables and outcome variables.

This classification of the variable types provides a first set of common rules for agents to probe and sense the world in a consistent manner.

In addition, causal learning theory provides a second set of common rules for analyzing data and computing marginal probabilities or expectation values that can be shared across agents with high external validity, unlike conditional probabilities or expectation values generated by agents using non-causal learning models that are generally biased and confounded by the specific data set each agent was trained on.
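
The distinction can be written down for the simplest case. The notation is introduced here for illustration only: $X$ is a free-to-vary variable, $Y$ an outcome variable, and $Z$ a single observed confounder assumed to satisfy the back-door criterion.

$$
P(y \mid x) = \sum_z P(y \mid x, z)\, P(z \mid x)
\qquad \text{versus} \qquad
P(y \mid \mathrm{do}(x)) = \sum_z P(y \mid x, z)\, P(z)
$$

The conditional quantity on the left is weighted by $P(z \mid x)$, which reflects how actions happened to be chosen in one agent's dataset. The interventional quantity on the right is weighted by $P(z)$ alone. When $X$ is randomized, $P(z \mid x) = P(z)$ and the two coincide.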

In other words, causal learning generates knowledge that transcends individual agent experiences and can be aggregated into an abstracted and trusted knowledge repository shared across agents, effectively defining a common language between them.

Finally, causal learning theory provides a common set of tools to quantify and report both the magnitude and the uncertainty around expected outcomes and effects, which allows for fair mediation and conflict resolution between agents based on consistent metrics about the quality (precision and accuracy) of their respective learning, as well as risk-adjusted optimization of collective decision making.
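
As one hedged illustration of mediation based on consistent metrics, suppose each agent reports an effect estimate together with its standard error. Assuming the estimates are independent and target the same underlying effect, they can be combined by inverse-variance weighting, so that more precise agents count for more. The function names and the reported numbers below are hypothetical.

```python
import math

def pool_effects(estimates):
    """Combine (effect, standard_error) pairs by inverse-variance weighting."""
    weights = [1.0 / se ** 2 for _, se in estimates]
    total = sum(weights)
    pooled = sum(w * eff for w, (eff, _) in zip(weights, estimates)) / total
    pooled_se = math.sqrt(1.0 / total)
    return pooled, pooled_se

def confidence_interval(effect, se, z=1.96):
    """Approximate 95% interval under a normality assumption."""
    return effect - z * se, effect + z * se

# Hypothetical reports from three agents: (effect estimate, standard error).
reports = [(2.0, 0.5), (3.0, 1.0), (2.4, 0.25)]

effect, se = pool_effects(reports)
low, high = confidence_interval(effect, se)
```

The pooled standard error is smaller than that of any single agent, which is the quantitative sense in which sharing comparable estimates improves collective knowledge.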

This common framework then allows agents to collaborate, i.e. share their individual knowledge of a common problem to find a better collective optimum; cooperate, i.e. share their individual knowledge of subsets of a problem to find a better global optimum; reason, i.e. share their individual knowledge of distinct problems to solve a more complex problem; and quite possibly innovate together, i.e. apply their individual knowledge of various problem spaces to solve a seemingly unrelated problem.

So, what’s missing?

Both Judea Pearl and Michael Jordan have argued that successfully implementing such a framework is years, if not decades, out.

Arguably, that is less because of theoretical or technical difficulties and more because of a much-needed mindset shift in the field of AI.

Parallel to the 4th industrial revolution and the digitization of everything, the field of neuroscience has seen tremendous development in techniques to monitor and image brain activity, from electroencephalography (EEG) to magnetic resonance imaging (MRI).

Unlike many data scientists, who have been treating the mountains of data passively generated by IoT as ground truth to build models, neuroscientists have been using these new sensing technologies as tools, in combination with proven experimental techniques, to uncover the ground truth about how our brains operate. They actively probe the response of the human brain to a variety of external stimuli and, in doing so, have made tremendous progress toward mapping areas of functional specialization in the brain and understanding image processing by the human visual system.

No neuroscientist would think of passively collecting mountains of brain-wave data and attempting to train a neural network on it in order to generate a data-driven model or “digital twin” of the human brain. Even if enough data were available today, such a model would provide little insight into how the brain actually works due to a lack of interpretability and, worse, it would almost surely be fraught with bias and confounds.

The reason is quite simple: randomization is the most fundamental mechanism for generating bias-free causal learning, yet actions, decisions or stimuli are hardly ever randomized in passively collected data.
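
A small simulation can illustrate the point. It assumes a made-up world in which an action has a true effect of 1.0 on an outcome and a hidden factor influences both the outcome and, in the passive data, the choice of action. All names and numbers are illustrative assumptions.

```python
import random

TRUE_EFFECT = 1.0  # assumed causal effect of the action on the outcome

def simulate(n, randomized, rng):
    """Return (action, outcome) pairs; a hidden factor also drives the outcome."""
    rows = []
    for _ in range(n):
        hidden = rng.gauss(0, 1)
        if randomized:
            action = rng.random() < 0.5   # coin flip, independent of hidden
        else:
            action = hidden > 0           # action chosen because of hidden
        outcome = TRUE_EFFECT * action + 2.0 * hidden + rng.gauss(0, 1)
        rows.append((action, outcome))
    return rows

def difference_in_means(rows):
    """Naive effect estimate: mean outcome with the action minus without."""
    treated = [y for a, y in rows if a]
    control = [y for a, y in rows if not a]
    return sum(treated) / len(treated) - sum(control) / len(control)

rng = random.Random(0)  # fixed seed so the run is repeatable
passive_estimate = difference_in_means(simulate(20000, randomized=False, rng=rng))
randomized_estimate = difference_in_means(simulate(20000, randomized=True, rng=rng))
```

With the passively collected data, the naive estimate absorbs the influence of the hidden factor and lands far above the true effect, while the randomized data recovers a value close to 1.0 with the same sample size and the same estimator.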

This is a major reason why previous attempts at applying machine learning to critical applications like healthcare have largely failed.

Active machine-learning techniques are too often perceived as inefficient because of the need to accumulate data over time, yet they provide a powerful foundation for building computer-generated, data-driven causal models, provided digitization is used as a tool to collect data in accordance with the requirements of causal learning theory rather than as an end in itself.

In fact, the amount of data needed to generate such models is orders of magnitude smaller than that needed for passive learning techniques, because it is collected in ways that deliver orders of magnitude greater statistical power.

Passive learning can further be used to accelerate learning, provided the datasets used have been collected with some level of randomization (e.g. past designed experiments, or DOEs).

Emerging new algorithms in Causal Reinforcement Learning, Deep Reinforcement Learning and Deep Causal Learning are paving the way towards computer agents that are capable of causal reasoning, both individually and collectively, and are likely to deliver a true step change in the performance and applicability of Artificial Intelligence in our everyday lives.

Written by Gilles Benoit